So let’s talk about the migration of my homelab from NSX-V to NSX-T. For the faint of heart, this is a daunting tale. Brought to you by the NSX vSwitch, and a limited number of physical NICs in my ESXi hosts.

Here’s the deal – a physical NIC (vmnic) in ESXi can be owned by exactly one vSwitch. That vSwitch can be a vSphere Standard Switch, a vSphere Distributed Switch, or an NSX vSwitch. But only by one of them at any given time.

So your host has to have at least one unused vmnic to be assigned to the NSX vSwitch during implementation. If you’ve got more than two attached to any given vSwitch, you’re fine, since you can move devices around without creating a single point of failure.

But what if your hosts were built with 4 pNICs, and you have 2 for infrastructure traffic (management, vMotion, vSAN, etc.) and 2 for virtual machine traffic? That’s still not so bad, as you can deploy NSX during a maintenance window, take a NIC away from VM traffic, and be prepared for the jeopardy state you’ve put yourself in.

How about a worst-case scenario – hypervisors with only a pair of pNICs installed. Maybe you have blade servers. Maybe you have rack servers with a pair of 25 Gbps or 40 Gbps NICs. That’s an easy implementation for NSX-V, since it simply leverages the vSphere Distributed Switch for its logical switches. It’s not so simple on NSX-T, since we’d have to pull a NIC to assign to the NSX vSwitch, and then what? With only two NICs, there’s no redundancy. This, in the modern data center, is sort of a party foul.
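To make the ownership rule concrete, here is a minimal Python sketch of the check I was doing in my head. It is not anything NSX or vSphere provides: the function name, the host layouts, and the switch names are all made up for illustration. Each vmnic maps to the one vSwitch that owns it (or `None` if it is unused), and a vmnic counts as safe to hand to the NSX vSwitch only if it is unused or its current switch would still keep the minimum number of uplinks afterwards. Each vmnic is evaluated on its own, not as a group.

```python
from collections import Counter


def movable_vmnics(owners, min_uplinks=2):
    """Return vmnics that could move to the NSX vSwitch.

    owners maps each vmnic name to the single switch that owns it,
    or None when the vmnic is unused. A vmnic qualifies if it is unused,
    or if its switch currently has more than min_uplinks uplinks.
    """
    counts = Counter(sw for sw in owners.values() if sw is not None)
    return sorted(
        nic for nic, sw in owners.items()
        if sw is None or counts[sw] > min_uplinks
    )


# Hypothetical host layouts, named only for this example.
four_nic_host = {
    "vmnic0": "infra-switch", "vmnic1": "infra-switch",
    "vmnic2": "vm-switch", "vmnic3": "vm-switch",
}
two_nic_host = {"vmnic0": "lab-switch", "vmnic1": "lab-switch"}
spare_nic_host = {"vmnic0": "lab-switch", "vmnic1": "lab-switch", "vmnic2": None}

print(movable_vmnics(four_nic_host))   # nothing can move without losing redundancy
print(movable_vmnics(two_nic_host))    # same problem with only two NICs
print(movable_vmnics(spare_nic_host))  # the unused vmnic is free to move
```

Dropping `min_uplinks` to 1 models the "accept the jeopardy state during a maintenance window" approach: every vmnic on a two-uplink switch then shows up as a candidate.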

This is the situation I find myself in. Only one of my ESXi hosts has more than two available NICs. So I had to read the NSX-T docs to find out more about the ability to move VMkernel ports to Logical Switches. This feature was introduced as API-only in NSX-T 2.1. Version 2.2 brings a UI element to the table.

VMkernel ports on Logical Switches? That’s crazy talk! Isn’t that going to create some kind of horrible circular dependency? Well, yes, it would if those Logical Switches were overlay switches backed by Geneve.

But you have to remember that NSX-T can support both Geneve-backed Logical Switches and VLAN-backed Logical Switches. Think about it like this: on any other vSwitch, Port Groups are created, and one of the port group policies available is VLAN Tagging. All a VLAN-backed Logical Switch really is, is a Port Group on the NSX vSwitch with the VLAN Tagging policy set. It’s no more complicated than that.
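If it helps to see the "port group with a VLAN tag" idea as data, here is a rough Python sketch that builds the kind of request body I would expect to send when creating a VLAN-backed Logical Switch through the NSX-T Manager API. Treat the field names (`display_name`, `transport_zone_id`, `admin_state`, `vlan`) as my assumption of the logical switch schema and check them against the API guide for your version. The switch name, the VLAN ID, and the transport zone placeholder are invented for the example, and nothing here actually talks to NSX Manager.

```python
import json


def vlan_logical_switch(name, transport_zone_id, vlan_id):
    """Build a request body for a VLAN-backed Logical Switch.

    The field names are assumed from the NSX-T Manager API logical
    switch schema; verify them against the API guide before use.
    """
    if not 0 <= vlan_id <= 4094:
        raise ValueError(f"VLAN ID out of range: {vlan_id}")
    return {
        "display_name": name,
        # Must reference a VLAN transport zone, not an overlay one.
        "transport_zone_id": transport_zone_id,
        "admin_state": "UP",
        # This single field is the "VLAN Tagging policy" from the text.
        "vlan": vlan_id,
    }


# Hypothetical values; the transport zone ID is a placeholder.
body = vlan_logical_switch("ls-management", "<vlan-transport-zone-uuid>", 10)
print(json.dumps(body, indent=2))
```

The point is how little there is to it: a name, the transport zone it belongs to, and a VLAN ID.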

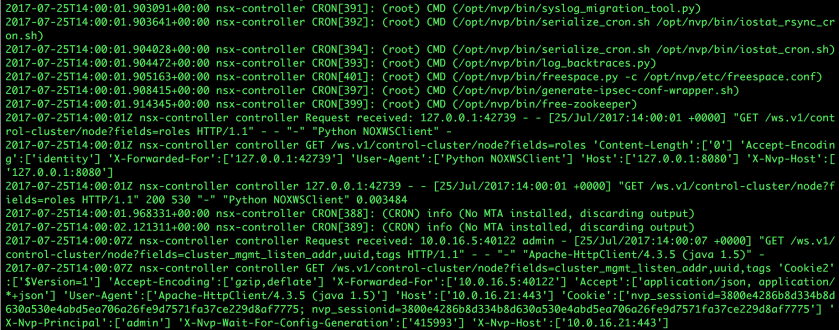

So how did I accomplish this crazy migration? Being my home lab, I had some flexibility in shutting down all of my VMs, so I powered everything down. I had to think about how to do that well, though, as my nested environments use storage from a DellEMC Unity VSA, and my DNS server is a virtual machine. As is vCenter Server, and NSX Manager for the physical infrastructure. I temporarily dumped all of the NICs for the VMs on some distributed port groups to get everything off the logical switches. After juggling DNS, vCenter, and NSX Manager around a bit, I unprepared my management cluster, then unprepared the compute cluster, and finally the old T5610 that I’ve kept around for some extra resources. I then followed these instructions from vswitchzero.com to make sure everything was gone, including the vSphere Web Client plugin. There may still be some vSphere Client plugin baggage hanging around, but I didn’t worry too much about that.

I was about to cross the point of no return. I took a few minutes to breathe, and to review Migrating Network Interfaces from a VSS Switch to an N-VDS Switch in the NSX-T documentation, only to realize that I needed the titular vSphere Standard Switch, and not the vSphere Distributed Switch I had my entire environment running on. So I jumped in, and built out a vSphere Standard Switch infrastructure to support my lab environment. I’d like to say that I was awesome and whipped out a quick PowerShell or Python script to knock that out for me, but I’ll admit that I did it the old-fashioned, brute force way of clicking my way, host by host, through the vSphere Client. Good thing I only have 5 hosts and 4 networks that live outside of NSX. Once everything was migrated to a single standard switch (with a single uplink attached), I tore down the vDS in my lab, deleted my old NSX Manager, and started deploying NSX-T 2.2.
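For the record, the script I didn't write could have looked something like this. It is a sketch, not what I ran: it only prints the `esxcli network vswitch standard` commands for each host so they can be reviewed and pasted into an SSH session. The host names, switch name, uplink, port group names, and VLAN IDs are all placeholders, not my real lab values.

```python
def vss_commands(vswitch, uplink, networks):
    """Build esxcli commands for one host: create a standard switch,
    attach a single uplink, and add one VLAN-tagged port group per network."""
    cmds = [
        f"esxcli network vswitch standard add --vswitch-name={vswitch}",
        f"esxcli network vswitch standard uplink add "
        f"--uplink-name={uplink} --vswitch-name={vswitch}",
    ]
    for name, vlan in networks.items():
        cmds.append(
            f"esxcli network vswitch standard portgroup add "
            f"--portgroup-name={name} --vswitch-name={vswitch}"
        )
        cmds.append(
            f"esxcli network vswitch standard portgroup set "
            f"--portgroup-name={name} --vlan-id={vlan}"
        )
    return cmds


# Placeholder inventory: 5 hosts and 4 networks, names and VLANs invented.
hosts = ["esx01", "esx02", "esx03", "esx04", "esx05"]
networks = {"Management": 10, "vMotion": 20, "vSAN": 30, "VM-Network": 40}

plan = {host: vss_commands("vSwitch1", "vmnic1", networks) for host in hosts}

for host, cmds in plan.items():
    print(f"# {host}")
    print("\n".join(cmds))
```

Note that this only builds the switch and port groups. Moving the VMkernel ports and VM vNICs over is a separate step, and port group names containing spaces would need quoting.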

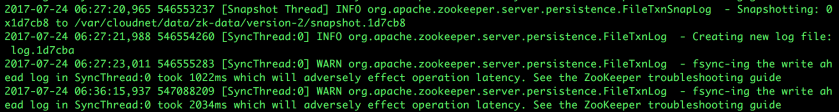

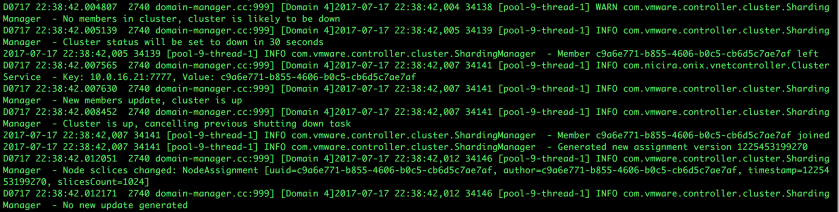

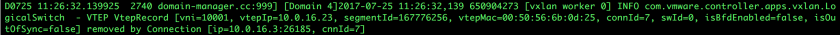

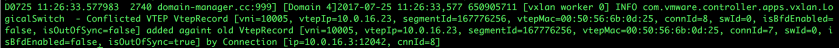

I’d like to say the story is over there, but it’s not. I prepared all of my hosts as Transport Nodes (can you see where this is going yet?), then migrated all of my management VMkernel ports over to a VLAN Logical Switch. No sweat, until I went ahead and migrated the remaining uplinks to the N-VDS. That may seem innocent enough, but I hadn’t moved my NSX Manager over to the N-VDS. So there I am, with NSX Manager hanging off what is now an internal virtual switch, just chilling out with no one but the Controllers to talk to. Realizing my conundrum, I tried to move the virtual machine over to the logical switch hosting my management network, only to realize that it doesn’t show up in the list of networks to which I can connect the vNIC. So I scrambled to move the NSX Manager to a host outside my management cluster (good thing I attacked that cluster first, I suppose), and proceeded to reverse the migration process. At this point, the two hosts in my management cluster are happily chugging along with their two uplinks attached to a vSphere Standard Switch.
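In hindsight, a pre-flight check before pulling the last uplink would have saved me the scramble. Here is a minimal sketch of that idea in Python, with an invented inventory: given which networks each VM is attached to and which port groups live on the standard switch, it lists every VM that would be stranded once that switch loses its uplinks. The VM and network names are hypothetical, and in a real environment the inventory would have to come from vCenter.

```python
def stranded_vms(vm_networks, vss_portgroups):
    """Return {vm: [port groups]} for VMs still attached to the standard
    switch, i.e. VMs that lose connectivity if its last uplink is removed."""
    stranded = {}
    for vm, nets in vm_networks.items():
        still_on_vss = sorted(set(nets) & set(vss_portgroups))
        if still_on_vss:
            stranded[vm] = still_on_vss
    return stranded


# Hypothetical inventory for illustration only.
vss_portgroups = {"Management", "VM-Network"}
vm_networks = {
    "nsx-manager": ["Management"],
    "vcenter": ["ls-management"],
    "dns01": ["ls-management"],
}

blockers = stranded_vms(vm_networks, vss_portgroups)
if blockers:
    print("Do not move the last uplink yet:")
    for vm, nets in blockers.items():
        print(f"  {vm} is still on {', '.join(nets)}")
```

With this inventory, the NSX Manager is the only blocker, which is exactly the trap I walked into.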

Which is actually better, because my management cluster also hosts my edges, and those need to connect to both my transport network, and a VLAN network, both of which are configured on my vSwitch.

Looking back at the trouble I’d gotten myself into, it makes a ton of sense to not allow NSX Manager on an N-VDS, because who wants to be in circular dependency hell? Some things you just have to learn the hard way.

With all that said, everything else has gone smoothly. Mostly. I still need to do some digging to figure out why MAC Learning isn’t doing me any good for my nested ESXi hosts on my logical switches. I’m sure I’m missing a quick API-only configuration switch or something. But routing works in and out of the nested environment (I can at least get to my nested vCenter and other virtual machines that aren’t ESXi hosts).

So, smooth migration? Not exactly.

Lessons learned? Lots. For example, planning is crucial. I know this. Hell, I preach this in my classes. But, as my RN wife keeps telling me about her time as an ER nurse, “bad things only happen to other people”. I still tell her to be careful, because they do happen to other people, until you _are_ other people. In my lab, I was “other people”. Fortunately, it’s a lab. The most I would have been out is about 2 days of manually rebuilding stuff from vCenter up if things had gone any more sideways. But what if this was a production environment? That was way too big a risk to take without writing up the migration plan, test plan, and testing in a lab first. I just jumped in, because I’m an instructor, and I get to live in my technical ivory tower devoid of maintenance windows and serious consequences.

Also learned – leave your management cluster alone. All too often, we forget that management is actually a pretty important workload category.

All in all, it’s done. I’m glad I did it, if nothing else for the experience of doing it. And I got to tear down an entire nested NSX-T environment, so I freed up a ton of resources that I don’t have (my hosts live in a perpetual state of red memory alerts). NSX-T is a big workload, even in a small lab environment.

And, my favorite part of the whole migration? I can now manage my entire lab from my Linux box. No more Flash for me. Well, mostly. The vSphere Client in 6.7 does enough that I don’t have to fire up the Flash-based Web Client on the Mac very often at all. I haven’t in at least 3 weeks. And I’m ok with that. Maybe when the next version of vSphere drops, I’ll be able to uninstall Flash Player from my Mac.